The Mind of a Machine: How AI Understands Language

Where does the line between AI’s patterns and human intent blur, creating the illusion of true understanding?

Technical concepts are often locked behind complicated linguistic structures, making the clarity at their core exhausting to reach. This is troubling, as a lack of understanding often leads to misinterpretation and confusion—both of which can be wielded as conceptual weapons.

This is especially true in the AI space, where the need for transparent, educational content has never been greater.

Below, we'll explore how a technical explanation can be simplified to give readers a solid grasp of a topic that may have seemed complex at first. That’s the aim, at least.

Success relies on a faithful approach to key terms, simpler sentence structures and wording, a more conversational tone, gentle repetition, reinforcing phrases, and practical examples. We can also play around with visual techniques to make the text more accessible.

To really engage with the challenge, try reading the original text below and think about how difficult it is to understand. Notice how much focus you need to grasp the message. Then, do the same with the reworked version.

1. How AI Processes Language

Artificial intelligence (AI) processes language through a field known as natural language processing (NLP), which combines linguistics, computer science, and machine learning to enable machines to understand, generate, and respond to human language.

At its core, NLP involves converting unstructured language into structured data that machines can interpret. This begins with tokenization, where text is split into smaller units (words, subwords, or characters).

These tokens are then transformed into numerical representations—typically using embeddings like word2vec or transformer-based contextual embeddings, such as those produced by BERT or GPT models.

Modern AI language models, like GPT-4, are based on transformer architectures. Transformers process language by attending to relationships between words in a sentence, regardless of their distance from one another.

This mechanism, called self-attention, allows models to weigh the importance of each word in context. For example, in the sentence "The cat sat on the mat, and it purred," the model learns that "it" likely refers to "the cat"—a task known as coreference resolution.

These models are trained on vast corpora of text, learning statistical patterns in how words co-occur. During training, the AI predicts the next word in a sentence, adjusting its internal parameters (weights) based on how accurate its predictions are. Over time, it builds a nuanced understanding of syntax, semantics, and even tone.

However, AI doesn’t “understand” language in the way humans do. It lacks consciousness or intent—it processes language based on probability and learned patterns. This can lead to impressively coherent responses but also factual errors or unintended bias, especially if the training data reflects societal biases.

In summary, AI processes language through a blend of pattern recognition, probability, and context-aware modelling using complex neural networks. While it can mimic understanding, its "knowledge" is statistical, not conscious.

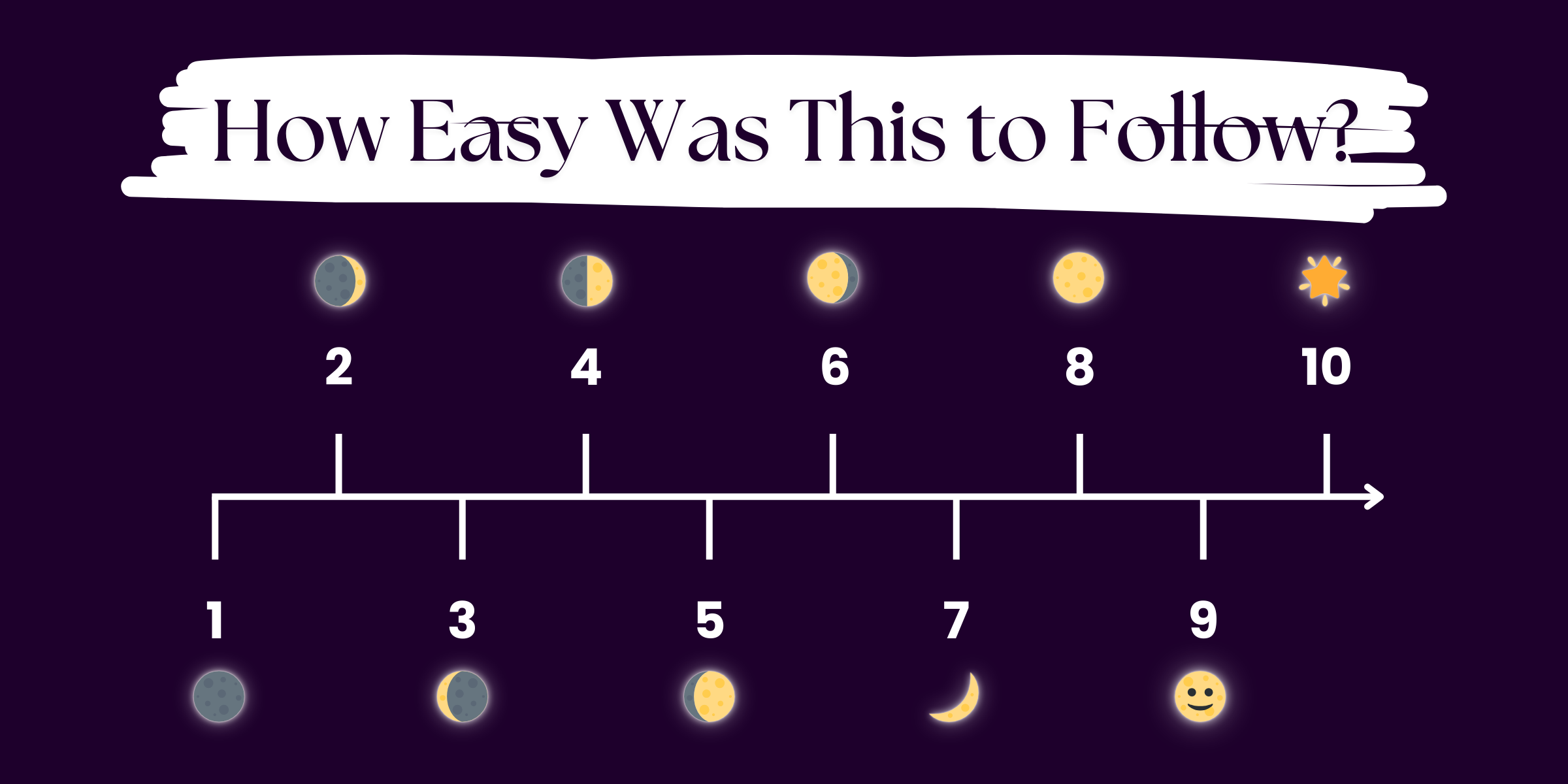

Clarity Meter

Could you explain how AI processes language to a friend?

2. How AI Reads and Responds to Language

Artificial intelligence (AI) processes language through a field called natural language processing (NLP), which blends linguistics, computer science, and machine learning to allow machines to understand, generate, and respond to human language.

At its core, NLP involves turning unstructured language into structured data that machines can later interpret. The process starts with tokenization, during which text is divided into smaller units (words, subwords, or characters).
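To make tokenization concrete, here is a minimal sketch in Python. It is an illustration only: the helper names (`word_tokenize`, `char_tokenize`, `build_vocab`) are made up for this example, and real language models typically use learned subword tokenizers rather than a simple pattern like this one.

```python
import re

def word_tokenize(text):
    """Split text into lowercase word tokens and punctuation tokens."""
    return re.findall(r"\w+|[^\w\s]", text.lower())

def char_tokenize(text):
    """Split text into single characters, skipping whitespace."""
    return [ch for ch in text if not ch.isspace()]

def build_vocab(tokens):
    """Give each distinct token an integer ID, in order of first appearance."""
    vocab = {}
    for tok in tokens:
        vocab.setdefault(tok, len(vocab))
    return vocab

sentence = "The cat sat on the mat, and it purred."
tokens = word_tokenize(sentence)
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]  # structured data: one integer per token

print(tokens)
print(ids)
```

Notice that both occurrences of "the" receive the same ID. At this stage the machine sees only a list of numbers, not words.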

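These tokens are then converted into numerical representations called embeddings. Some embeddings, like word2vec, give each word one fixed set of numbers. Others are contextual, meaning the numbers change with the surrounding words. Transformer-based models such as BERT and GPT produce contextual embeddings.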
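A toy example may help show what "numerical representation" means in practice. The vectors below are hand-picked for illustration and are not taken from word2vec, BERT, or GPT; real embeddings are learned from data and usually have hundreds of dimensions. The sketch assumes only that related words end up with vectors pointing in similar directions, which is measured here with cosine similarity.

```python
import math

# Hand-picked 3-dimensional vectors, for illustration only.
EMBEDDINGS = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "mat": [0.1, 0.2, 0.9],
}

def cosine_similarity(a, b):
    """Return the cosine of the angle between vectors a and b."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

cat_dog = cosine_similarity(EMBEDDINGS["cat"], EMBEDDINGS["dog"])
cat_mat = cosine_similarity(EMBEDDINGS["cat"], EMBEDDINGS["mat"])

print(f"cat vs dog: {cat_dog:.2f}")
print(f"cat vs mat: {cat_mat:.2f}")
```

In this made-up table, "cat" scores as more similar to "dog" than to "mat", which is the kind of relationship learned embeddings are meant to capture.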

Modern AI language models, like GPT-4, are based on transformer architectures. Transformers process language by analyzing the relationships between words in a sentence. They can do this no matter how far apart the words are.

This mechanism is called self-attention, and it allows models to weigh the importance of each word in context. For example, in the sentence “The cat sat on the mat, and it purred,” the model learns that “it” likely refers to “the cat.” This task is called coreference resolution.
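The weighing step can be sketched in a few lines of Python. This is a simplified version of scaled dot-product self-attention, under two loud assumptions: the word vectors are hand-placed rather than learned (so that "it" sits close to "cat"), and each vector serves as its own query, key, and value, whereas a real transformer learns separate projections for each role.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = inputs."""
    d = len(vectors[0])
    outputs, all_weights = [], []
    for q in vectors:
        # Score this word against every word in the sentence, itself included.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in vectors]
        weights = softmax(scores)
        # New representation: a weighted mix of all the word vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

tokens = ["cat", "mat", "it"]
vectors = [
    [1.0, 0.0, 1.0],  # cat
    [0.0, 1.0, 0.0],  # mat
    [0.9, 0.1, 0.8],  # it (hand-placed near "cat" for this illustration)
]

outputs, weights = self_attention(vectors)
for tok, w in zip(tokens, weights[2]):
    print(f"'it' attends to {tok!r} with weight {w:.2f}")
```

With these vectors, "it" gives more weight to "cat" than to "mat". In a trained model that preference comes from learned parameters, not from values chosen by hand.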

Language models learn from large amounts of text data, picking up statistical patterns in how words appear together. Specifically, during training, they predict the next word in a sentence.

Depending on how accurate its predictions are, a model adjusts its internal parameters (weights). Over time, it builds a nuanced understanding of syntax, semantics, and even tone.
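The predict-then-adjust loop can be shown at toy scale. The sketch below is not how GPT-4 is built: it assumes a ten-word corpus, one weight per pair of words in place of a neural network, and plain gradient descent with a made-up learning rate. The function names are hypothetical. What it shares with real training is the cycle of predicting the next word, comparing with the actual next word, and nudging the weights.

```python
import math

corpus = "the cat sat on the mat and the cat purred".split()
vocab = sorted(set(corpus))
index = {w: i for i, w in enumerate(vocab)}
V = len(vocab)

# One weight per (current word, next word) pair, all starting at zero.
weights = [[0.0] * V for _ in range(V)]

def next_word_probs(word):
    """Softmax over the weights in the row for `word`."""
    row = weights[index[word]]
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def train(epochs=200, lr=0.5):
    """Predict each next word, then nudge the weights toward the right answer."""
    pairs = list(zip(corpus, corpus[1:]))
    for _ in range(epochs):
        for cur, nxt in pairs:
            probs = next_word_probs(cur)
            row = weights[index[cur]]
            for j in range(V):
                target = 1.0 if j == index[nxt] else 0.0
                # Gradient of the cross-entropy loss for weight j is (prob - target).
                row[j] -= lr * (probs[j] - target)

def predict(word):
    """Return the most probable next word after `word`."""
    probs = next_word_probs(word)
    return vocab[probs.index(max(probs))]

before = next_word_probs("the")[index["cat"]]
train()
after = next_word_probs("the")[index["cat"]]

print(f"P(cat | the) before training: {before:.2f}")
print(f"P(cat | the) after training:  {after:.2f}")
print("Most likely word after 'the':", predict("the"))
```

Before training, every word is equally likely to follow "the". Afterwards the model favors "cat", simply because "the cat" appears more often in the corpus than "the mat".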

However, it’s important to note that AI doesn’t “understand” language the way we do. It doesn’t possess consciousness or intent. It processes language based on learned patterns and statistical probability, which is why its ability to handle context, layered language, and storytelling techniques can feel limited.

Naturally, AI's reliance on data patterns can lead to very impressive and logical responses, but also mistakes or biases that were unintentionally learned. This is especially true if the training data reflects societal biases, from gender and geopolitical discrimination to other systemic prejudices.

To sum up, AI processes language through a blend of pattern recognition, probability, and context-aware modeling. It does so using complex neural networks, which are loosely inspired by the neurons in the brain. But while it can convincingly mimic understanding, its “knowledge” is statistical, not conscious.